Power Query is an excellent tool for data mashup; however, when it comes to showing its powers as a desktop ETL tool, you realise that no matter how powerful at E and T it is, the L is falling behind, as you can only load the data into the embedded tabular data model that comes with Power BI.

Ignoring R, local cubes and HTTP POST requests, I wanted to showcase a few other methods for exporting data from Power Query and loading it to SQL Server.

The logic I follow is straightforward — because there is no apparent limitation to the type of queries you can run against OleDb or ADO.NET datasets — a SELECT query will be executed with the same success as an INSERT query.

As a prerequisite, I’ve created a table that I populate initially with single records on the SQL Server side, then with 1M records.

| CREATE TABLE [dbo].[Demo]( | |

| [ID] [int] IDENTITY(1,1) NOT NULL, | |

| [Key] [int] NULL, | |

| [Value] [varchar](50) NULL | |

| ) |

OleDb

Following the logic described above, inserting a single entry into Demo table via OleDb looks relatively trivial.

| = OleDb.DataSource( | |

| "provider=SQLOLEDB.1;data source=.;database=QueryPower", | |

| [Query=Text.Format("INSERT INTO [dbo].[Demo] VALUES(#{0},'#{1}')",{1,"A"})]) |

However, if you try to follow this approach, you’ll soon notice that in addition to fiddling around with Text.Format to get your INSERT statement right, you will also have to approve each parameter variation from your SQL statement. This makes the approach unfeasible unless you construct your INSERT statement to include all millions of records in a single string.

ADO.NET

On the other hand, an ADO.NET connection wrapped in a Value.NativeQuery function provides a more familiar approach to performing data INSERT. This method has the convenience of passing query parameters as a record or list inside the SQL statement. As a result, you need to approve the firewall rule for the SQL query only once.

| = Value.NativeQuery( | |

| Sql.Database(".", "QueryPower"), | |

| "INSERT INTO [dbo].[Demo] VALUES(@key,@value)", | |

| [key=10,value="A"]) |

Changing the scale

The methods I’ve described so far work reasonably well when you need to insert a few hundred records in a SQL table. However, when the problem explodes to millions of rows, you need to do some prep work before sending over all of that data. I’ll detail three techniques for exporting datasets with millions of records: Table Valued Parameters, XML and JSON.

For example, I use a 1M records table I generate from a list with an incremental sequence Key and GUID generated Value field. Table dummy

| = Table.FromRecords( | |

| List.Generate(()=> [ key = 0, value = Text.NewGuid()], | |

| each [key] < 1000000, | |

| each [key = [key]+1, | |

| value = Text.NewGuid()])) |

TVP

When doing bulk inserts in ADO.NET, the most recommended approach is to use a Table Valued Parameter to send data to a Stored Procedure that performs the INSERT. As a prerequisite on SQL Server side, I had to add a table type and a stored procedure that accepts this table type as a READONLY parameter.

| CREATE PROC [dbo].[usp_InsertDemoXML] @xmlBin AS varbinary(max) | |

| AS | |

| BEGIN | |

| declare @xml xml = convert(xml,@xmlBin) | |

| INSERT INTO Demo ([Key],[Value]) | |

| SELECT | |

| [key] = x.v.value('key[1]','int'), | |

| [Value] = x.v.value('value[1]','varchar(50)') | |

| FROM @xml.nodes('/table/row') x(v) | |

| END |

Although sending a TVP into an SP is a straightforward task in .NET, it requires casting the data reader (table) to a SqlDbType.Structured data type.

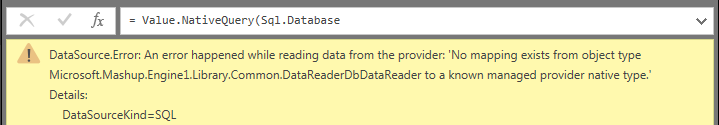

I wanted to rely on Power Query internal data type conversion and sent a table as a parameter to my newly created SP. Needless to say I was greeted with the error below:

After giving up on the idea of casting a Power Query table into a SQL Structured data type, I’ve decided to replicate the internal call during an exec SP with @TVP. Thus I’ve built a rather lengthy 1002 row SQL statement that declares an internal table, inserts all the records from the dummy table and calls the SP with an internal table as a parameter

| let | |

| //building the Table Value Constructor for a record | |

| rows = (t) => Table.TransformRows(t, each "("&Text.From([key])&",'"&[value]&"')"), | |

| //group entries in sets of 1000 because Table Value Constructor have a limitation of 1000 entries | |

| values = List.Generate(()=>0, | |

| (i)=>i<1000, | |

| (i)=>i+1, | |

| (i)=>Text.Combine(rows(Table.Range(dummy,i*1000,1000)),",")), | |

| //adding the declaration of TVP and the call to SP that uses TVP | |

| TVP = Text.Combine({"declare @p1 dbo.DemoList #(lf)"}& | |

| List.Transform(values, each "insert into @p1 values"&_&"#(lf)") | |

| &{"#(lf)exec dbo.usp_InsertDemoTVP @TVP=@p1"}), | |

| //call the lenghty SQL statement | |

| out = Value.NativeQuery(Sql.Database(".", "QueryPower"),TVP) | |

| in | |

| out |

XML

Back in the days when XML was still cool, sending some tabular data to SQL Server was the matter of serializing a recordset into an XML document and sending it to a stored procedure that parsed it back into a recordset. Nothing has changed since on the SQL Server side.

| CREATE PROC [dbo].[usp_InsertDemoXML] @xmlBin AS varbinary(max) | |

| AS | |

| BEGIN | |

| declare @xml xml = convert(xml,@xmlBin) | |

| INSERT INTO Demo ([Key],[Value]) | |

| SELECT | |

| [key] = x.v.value('key[1]','int'), | |

| [Value] = x.v.value('value[1]','varchar(50)') | |

| FROM @xml.nodes('/table/row') x(v) | |

| END |

Since Power Query lacks any functions for constructing XML documents, I found it very difficult to come up with an approach for building binary XML documents that perform on par with JSON serialization. I’ve tried various combinations of looping through the records of a table via List.Generate and List.Accumulate, nevertheless Table.TransformRows and avoiding making any calls to Text.Format seemed to deliver the best performance.

| let | |

| //Transform each row of [dummy] table into a binary XML | |

| rows = Table.TransformRows(dummy, | |

| each Binary.Combine({Text.ToBinary("<row><key>") | |

| ,Text.ToBinary(Text.From([key])) | |

| ,Text.ToBinary("</key><value>") | |

| ,Text.ToBinary([value]) | |

| ,Text.ToBinary("</value></row>")})), | |

| //Wrapping up all the rows in <table> tags | |

| out = Binary.Combine({Text.ToBinary("<table>")}& | |

| rows& | |

| {Text.ToBinary("</table>")}) | |

| in | |

| out |

On calling Value.NativeQuery, Power Query converts the binary string into SQL varbinary(max) data type, thus for the final call, it requires only mapping the placeholder to the binary XML document.

| let | |

| out = Value.NativeQuery(Sql.Database(".", "QueryPower"),"usp_InsertDemoXML @xmlBin",[xmlBin=dummy2XML]) | |

| in | |

| out |

JSON

Since SQL Server 2016 introduction of JSON support, sending and receiving data to/from SQL Server got a lot easier. Power BI is no exception, sending data to a SQL Server table requires addition of a SP with JSON parameter and on Power Query side serializing the dataset as a text bases JSON object with Json.FomValue.

| CREATE PROC [dbo].[usp_InsertDemoJSON] @json AS nvarchar(max) | |

| AS | |

| BEGIN | |

| INSERT INTO Demo ([Key],[Value]) | |

| SELECT * | |

| FROM OPENJSON(@json) | |

| WITH ([Key] int, [Value] varchar(50)) | |

| END |

| let | |

| //serialize table [dummy] into a JSON object | |

| json = Text.FromBinary(Json.FromValue(dummy)), | |

| //pass JSON object to SP that unwrapps it as a recordset & performs INSERT operation | |

| out = Value.NativeQuery(Sql.Database(".", "QueryPower"),"usp_InsertDemoJSON @json",[json=json]) | |

| in | |

| out |

Because of native JSON support on both SQL and Power BI sides, this was by far the most easy and fast way of sending data across.

there is also such a thing.

https://ruiromanoblog.wordpress.com/2016/04/21/use-power-bi-desktop-as-an-etl-tool/

LikeLike

Although using PowerBIETL is a valid choice, I don’t like the approach as it relies on PowerShell. My purpose for this post was to demo how Power Query can be used for data extraction as well as data ingestion to other sources than the $Embedded$ cube

LikeLike

Thank you for this. When I replicate your examples, sometimes the table gets inserted twice (2m rows). Any idea how to prevent this?

LikeLike

Hi Matt, that’s a very good question.

You are getting the duplicate records because Power Query executes the M script twice, one for preview, assuming that you preview the results of the script and once when it loads the query into the data model.

You can solve this problem in a couple of ways. One would be to put the appropriate insert logic on the SQL side. One such mechanism could be to check on insert if the records are already present or not. Or have a flag which controls if the records get inserted or not. This could be set for example only after you’ve clicked apply and close in the Power Query Editor and refreshed your model.

Another approach would be to run the script in the preview window of Power BI, and then disable the query.

A more convoluted approach would be to set an “Insert Parameter” as a slicer in Power BI Report view. Then have another Power Query script that reads the slicer selection and sets a Power Query parameter that controls insertion of data.

…And the list goes on 🙂

LikeLike

I wonder if there is a way to use a text odbc driver to write the query output as a csv. Ideally in 10k or so chunks. Then you could define your transformation jobs as excel embedded pq’s and write a simple python program to schedule, run and load csv to destinations in a more reliable etlish way.

LikeLike

I’ve tried this approach and it has limitations. At least while trying to insert data into a file via OleDB route. The file has to exist. Value.NativeQuery doesn’t support the Microsoft.ACE.OLEDB.16.0 driver. And the list of problems goes on. If you want to play around with this approach have a go at the following command

= OleDb.Query(“Data Source=C:\code;Provider=Microsoft.ACE.OLEDB.16.0;Extended Properties=””Text;HDR=No;”””,”INSERT INTO [abc.csv] VALUES (1)”)

The file abc.csv has to exist beforehand. And also you need to instantiate it with a newline (header).

In my opinion a more promising approach if you want to insert data into something and later read it from a file would be to use SQL’s FileTables

https://docs.microsoft.com/en-us/sql/relational-databases/blob/filetables-sql-server

LikeLike

Just in case there are any more PowerBI newbies like me out there, a note that had me stumped for a while: The final example uses “Json.ValueFrom” which will come up as a syntax error. It doesn’t exist. Spent some time googling and found nothing. After some trial and error I hit upon the answer… it should read “Json.FromValue”.

LikeLiked by 1 person

Thanks Sean.

LikeLike